- Python Selenium Chrome Web Scraping Data Type

- Python Selenium Chrome Web Scraping Tutorial

- Python Selenium Chrome Web Scraping Extension

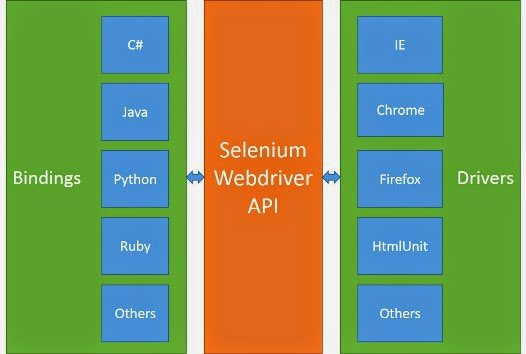

Selenium is a widely used tool for web automation. It comes in handy for automating website tests and for web scraping, especially on sites that require JavaScript to be executed. In this article, I will show you how to get up to speed with Selenium using Python.

What is Selenium?

- Selenium is a great tool for web scraping, especially when learning the basics. But, depending on your goals, it is sometimes easier to choose an already-built tool that does web scraping for you. Building your own scraper is a long and resource-costly procedure that might not be worth the time and effort.

- Web scraping is becoming more and more central to the jobs of developers as the open web continues to grow. In this article, I’ll be explaining how and why web scraping methods are used in the data gathering process, with easy to follow examples using Python 3.

- Selenium is an open-source tool for automating web browsers. It is primarily used for testing in the industry, but it can also be used for web scraping. We’ll use the Chrome browser, but you can try any other browser; the steps are almost the same.

Selenium’s mission is simple: to automate web browsers. If you need to execute the same task on a website over and over, it can be automated with Selenium. This is especially the case when you carry out routine web administration tasks, and also when you need to test a website.

Python provides several libraries for web scraping, such as Scrapy, Requests, Beautiful Soup 4, lxml and Selenium. This tutorial is a beginner’s introduction to web scraping with Python and Selenium.

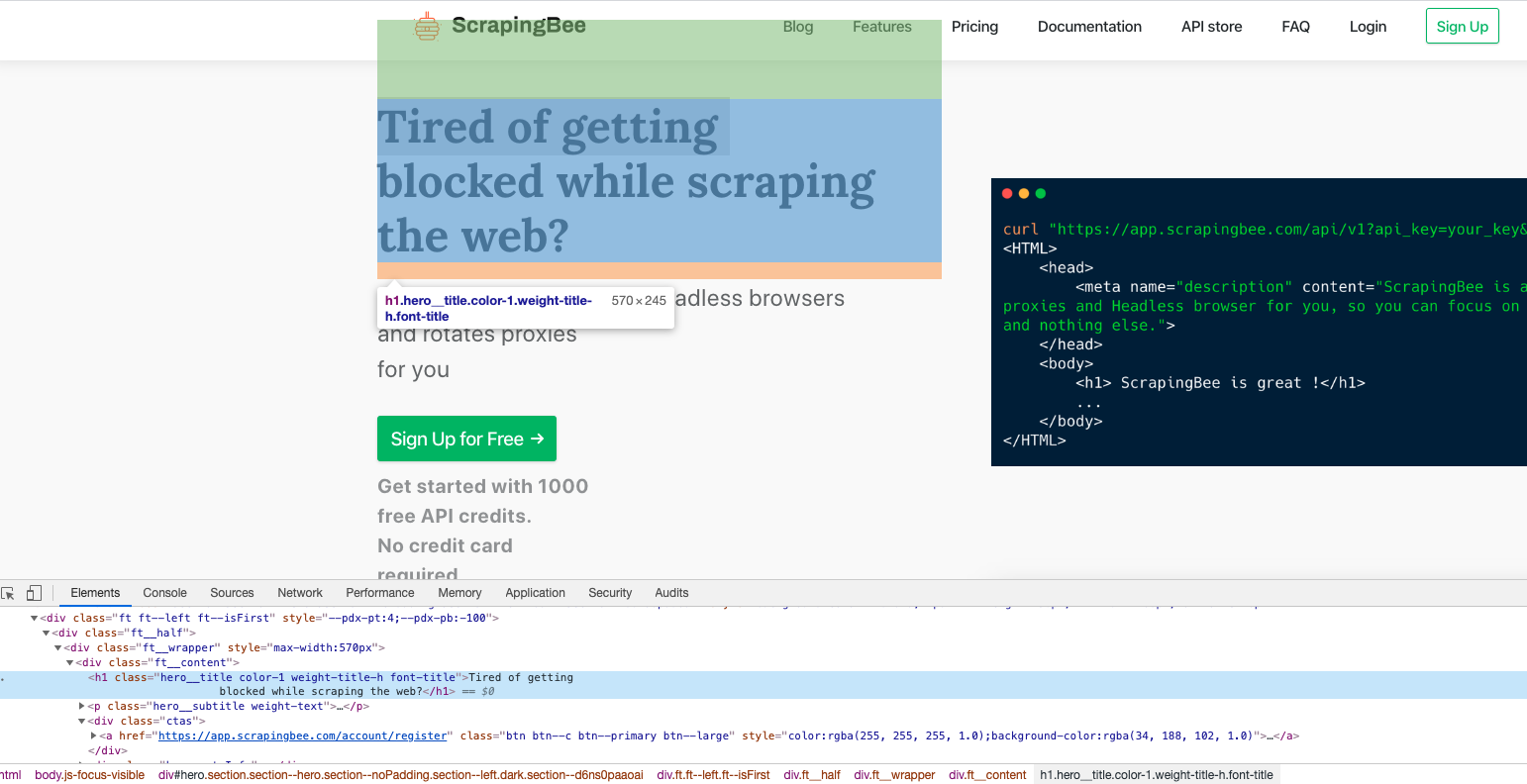

With this simple goal, Selenium can be used for many different purposes, web scraping among them. Many websites run client-side scripts to present data asynchronously. This causes problems when the data you need is rendered through JavaScript. Selenium comes to the rescue by automating the browser to visit the site and run the client-side scripts, giving you the fully rendered HTML. If you simply use the Python requests package to get HTML from a site that runs client-side code, the HTML you receive won’t be complete.

There are many other use cases for Selenium. For now, let’s get to using Selenium with Python.

Python Install Selenium

Before you begin, you need to download the driver for your particular browser. This article uses Chrome, so download ChromeDriver from its official download page, choosing the build that matches your installed Chrome version.

The next step is to install the Selenium Python package into your environment. It can be done with the following pip command: `pip install selenium`

Selenium 101

To begin using Selenium, you need to instantiate a Selenium WebDriver. This object then controls the web browser, and you can take actions as if you were the one using the browser, such as navigating to a URL or clicking a button. Let’s see how to do that using Python.

First, import the necessary modules and instantiate a selenium webdriver. You need to provide the path to the chromedriver.exe you downloaded earlier.
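A minimal sketch of this step, assuming Selenium 4, where the driver path is passed through a `Service` object. The function name `create_driver` is a placeholder chosen for illustration and is not part of the original article.

```python
def create_driver(chromedriver_path):
    """Start Chrome under Selenium control and return the driver."""
    # Selenium is imported here, so it is only needed when the function is called.
    from selenium import webdriver
    from selenium.webdriver.chrome.service import Service

    # The Service object tells Selenium where the chromedriver executable is.
    service = Service(executable_path=chromedriver_path)
    return webdriver.Chrome(service=service)
```

Calling `create_driver(r"C:\tools\chromedriver.exe")` (a hypothetical path) should open the Chrome window. Recent Selenium releases can also locate a driver on their own, in which case `webdriver.Chrome()` with no arguments may be enough.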

After executing the code, a new browser window opens with a notice that it is being controlled by automated test software.

In some cases, Chrome shows an error message when it opens, and you need to disable extensions to get rid of it. To pass options to Chrome when starting it, use the following code.
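One way to pass options could look like the sketch below. It assumes Selenium 4 and Chrome's `--disable-extensions` command-line switch. The function names `build_chrome_options` and `create_driver_with_options` are illustrative placeholders.

```python
def build_chrome_options():
    """Return Chrome options with extensions disabled."""
    # Selenium is imported here, so it is only needed when the function is called.
    from selenium.webdriver.chrome.options import Options

    options = Options()
    options.add_argument("--disable-extensions")  # Chrome command-line switch
    return options


def create_driver_with_options(chromedriver_path):
    """Start Chrome with the options above and return the driver."""
    from selenium import webdriver
    from selenium.webdriver.chrome.service import Service

    return webdriver.Chrome(
        service=Service(executable_path=chromedriver_path),
        options=build_chrome_options(),
    )
```

Further switches can be added with more `add_argument` calls before the driver is created.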

Now, let’s navigate to a specific URL, in our case Google’s homepage, by calling the driver’s get method.
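A small sketch of the navigation step. It assumes `driver` is an already started WebDriver. The helper name `open_page` is made up for this example.

```python
GOOGLE_URL = "https://www.google.com"


def open_page(driver, url=GOOGLE_URL):
    """Navigate the browser to `url` and return the title of the loaded page."""
    driver.get(url)  # returns once the browser reports the page as loaded
    return driver.title
```

For example, `open_page(driver)` should load Google's homepage and return its title.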

Locate a Text Box and Enter a Value

What do you do on Google? You search! Let’s use Selenium to perform an automated search on Google. First, you need to learn how to locate elements.

Selenium provides many ways to do so. You can find web elements by ID, name, link text and several other strategies. The Selenium documentation has the full list.

We will locate the text box by name. Google’s search box has a name of q. Let’s find this element with Selenium.
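A sketch of the lookup, assuming Selenium 4, where elements are located with `find_element` and a `By` strategy. The helper name `find_search_box` is illustrative.

```python
def find_search_box(driver):
    """Return the element whose name attribute is "q" (Google's search box)."""
    # Selenium is imported here, so it is only needed when the function is called.
    from selenium.webdriver.common.by import By

    # Raises NoSuchElementException if no such element is on the page.
    return driver.find_element(By.NAME, "q")
```

The same call works with other strategies, for instance `By.ID` or `By.CSS_SELECTOR`.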

Once the element is found, type your search query into it. We will search for this site by calling the following method.
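Typing into the element could be sketched as follows. It assumes `element` is the search box found earlier. The helper name `type_query` and the sample query in the usage note are placeholders, since the original search term is not given.

```python
def type_query(element, query):
    """Replace the current content of a text box with `query`."""
    element.clear()           # remove any text already in the box
    element.send_keys(query)  # type the query as keyboard input
```

For instance, `type_query(search_box, "selenium python tutorial")` would fill the box with that hypothetical query.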

Lastly, send an “Enter” command as you would from your keyboard.
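Special keys are available through Selenium's `Keys` class. A sketch, with `press_enter` as an illustrative helper name:

```python
def press_enter(element):
    """Send the Enter key to an element, as if pressed on the keyboard."""
    # Selenium is imported here, so it is only needed when the function is called.
    from selenium.webdriver.common.keys import Keys

    element.send_keys(Keys.ENTER)
```

After this call on the search box, the browser should submit the query and show the results page.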

Wait for an Element to Load

As mentioned earlier, the page you browse to often doesn’t load completely at first. Instead, it executes client-side code that takes longer to finish, and you need to wait for it before continuing. Selenium provides the WebDriverWait class for this. Let’s see how to use it.

TipRanks.com is a site that lets you see the track record and measured performance of any analyst or blogger you come across. We will browse to Apple’s analysis page, which runs JavaScript to generate its charts when accessed. Our code will wait until these are generated before continuing.

First, we need to import additional modules for our sample: By, expected_conditions and the WebDriverWait class. The expected_conditions module provides common conditions that are frequently used when automating web browsers, for example to detect the visibility of elements.

After accessing the page, we will wait for a maximum of 10 seconds until a specific element becomes visible. We are looking for span.fs-13, which becomes visible once the charts finish loading.
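The imports and the wait could be combined as in this sketch. It assumes `driver` has already navigated to the page; the page URL is not reproduced here because the article does not state it. The helper name `wait_for_visible` is illustrative, and the selector and timeout defaults come from the text above.

```python
def wait_for_visible(driver, css_selector="span.fs-13", timeout=10):
    """Block until the element matching `css_selector` is visible, then return it."""
    # Selenium is imported here, so it is only needed when the function is called.
    from selenium.webdriver.common.by import By
    from selenium.webdriver.support import expected_conditions as EC
    from selenium.webdriver.support.ui import WebDriverWait

    wait = WebDriverWait(driver, timeout)
    # until() polls the condition and raises TimeoutException if the element
    # is still not visible after `timeout` seconds.
    return wait.until(
        EC.visibility_of_element_located((By.CSS_SELECTOR, css_selector))
    )
```

Because the condition is polled, the call returns as soon as the element appears rather than always waiting the full 10 seconds.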

Get Page HTML

Once the driver has loaded a page and it has rendered completely, either after waiting for elements to load or just after navigating to the page, you can extract the page’s rendered HTML quite easily with Selenium. The HTML can then be processed with BeautifulSoup or other packages to extract information from it.

Run the following command to get the page HTML.
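The rendered HTML is exposed through the driver's `page_source` attribute. The sketch below reads it and, as a stand-in for BeautifulSoup, uses the standard library's `html.parser` to pull out link targets. The names `get_page_html`, `LinkCollector` and `extract_links` are made up for this example.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect the href of every <a> tag (a stdlib stand-in for BeautifulSoup)."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def get_page_html(driver):
    """Return the HTML of the page as currently rendered in the browser."""
    return driver.page_source


def extract_links(html):
    """Return all link targets found in an HTML string."""
    collector = LinkCollector()
    collector.feed(html)
    return collector.links
```

With a live driver, `extract_links(get_page_html(driver))` would list the links on the current page. The same HTML string could be handed to BeautifulSoup instead.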

Conclusion

Selenium makes web automation very easy, allowing you to perform advanced tasks by automating your web browser. We learned how to get Selenium ready to use with Python and covered its most important tasks, such as navigating to a site, locating elements, entering information and waiting for items to load. I hope this article was helpful. Stay tuned for more!